Brief

More and more companies these days rely on Net Promoter® scores to gauge the quality of their relationships with customers (see below, “Calculating your Net Promoter score”). But are these scores accurate and reliable?

Not always. The chief executive of one business-to-business (B2B) company, for instance, hired a group of market research firms to gather data about promoters and detractors. The research firms carpet bombed the company’s customer organizations with surveys. But the resulting scores proved volatile and undependable. Response rates were low, which increased random variation from one period to the next. And no one could tell exactly which individuals filled out the surveys. Indeed, subsequent analysis showed that few senior decision makers or influencers ever took part. So the scores didn’t accurately reflect key individuals’ attitudes and actions.

Similar issues have cropped up at other companies, those that sell to consumers as well as those that sell to businesses. In the most serious cases, problems with the survey data lead people to mistrust the entire Net Promoter approach, thereby undermining its value.

Yet many Net Promoter practitioners have learned to compile accurate, trustworthy scores week in and week out. They generate high response rates from the right customers. They eliminate the many sources of bias that can contaminate the data. The precision and granularity of their scores enable them to learn more—and learn more quickly—about what their customers are thinking and feeling, so they can take appropriate action.

In this article we will explore how they accomplish all these objectives.

The fundamentals: Frequency and consistency

Accurate Net Promoter scores depend on a constant flow of data. Many leading companies survey a sample of their customers every week. Nearly all issue reports every week or every month. Frequent surveys enable you to monitor the scores for unexplained variation. They also allow you to test new approaches and tactics to see if these changes improve outcomes.

Consistency is equally vital. A restaurant chain, for example, wanted to assess the loyalty of customers at a potential acquisition target. The chain first had a market researcher ask customers leaving the target company’s restaurants how likely they were to recommend the restaurant to a friend or colleague. Measured this way, the target’s Net Promoter score was almost 40. Later, however, a due-diligence team polled a broader sample of the restaurants’ customers via brief email surveys and calculated a score of negative 39. The moral: Different survey methods can produce radically different results—so it’s essential to find the one method that works best for you and stick to it. The consistency principle applies even to seemingly trivial variations in methodologies, such as the wording of survey questions. Every change needs to be tested first to see if it has any effect on responses.


High response rates from the right customers

The statistical validity of any survey score always depends partly on response rates; the higher the rate, the greater the accuracy. At Enterprise, the survey completion rate for customers who answer the phone exceeds 95%. At Allianz, response rates for both consumer and business-to-business sectors are around 80%. In our experience, a response rate below 40% for business-to-consumer (B2C) enterprises or below 60% for B2B enterprises is a red flag. One likely result of low rates is responder bias, which we will discuss in a moment.
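To make the link between response volume and random variation concrete, here is a minimal Python sketch. It is our illustration, not a method described by the companies above. It assumes the responses are a simple random sample, scores each one as +1 (promoter), 0 (passive) or -1 (detractor), and applies the usual normal approximation; the function name `nps_margin_of_error` and the 1.96 multiplier (roughly 95% confidence) are assumptions made for the example. It says nothing about responder bias, which no amount of extra volume corrects.

```python
import math

def nps_margin_of_error(promoter_share, detractor_share, n_responses, z=1.96):
    """Approximate margin of error, in NPS points, for a surveyed NPS.

    Assumes a simple random sample in which each response counts as
    +1 (promoter), 0 (passive) or -1 (detractor).
    """
    if n_responses <= 0:
        raise ValueError("n_responses must be positive")
    mean = promoter_share - detractor_share
    # Variance of a variable that takes the values +1, 0 and -1.
    variance = promoter_share + detractor_share - mean ** 2
    return 100 * z * math.sqrt(variance / n_responses)

# Illustrative mix: 60% promoters and 10% detractors, i.e. an NPS of 50.
for n in (50, 200, 800):
    moe = nps_margin_of_error(0.60, 0.10, n)
    print(f"{n:>4} responses: NPS 50 plus or minus {moe:.1f} points")
```

Under these assumptions the margin shrinks with the square root of the number of responses, so quadrupling the responses halves the period-to-period noise.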

What counts most, of course, is high response rates from your core or target customers—those who are most profitable and whom you would most like to become promoters. Retail banks, for example, find it helpful to survey their customers by segment, so that the responses of their most profitable clients aren’t drowned out by those who are only marginally profitable.

Identifying the right customers is also important in B2B situations, but it can be even more challenging. Consider the situation of Philips Healthcare, which sells radiology equipment and services to hospitals and clinics. Relevant customer contacts include hospital CEOs and CFOs, heads of radiology, respected physicians, nurses and the technicians who operate the machines. At first, Philips’s Net Promoter researchers didn’t do enough up-front work to identify the right decision makers, influencers and users to survey. The numbers they reported to corporate each month were based on small samples from mixes of customers, resulting in volatile scores. Rather than pulling back from NPS, however, the Philips leadership team stepped up the effort to design a reliable measurement system, and by the second year had built accurate customer lists by business and geographical market.

Freedom from bias

Bias can creep into survey data in all sorts of ways. Consumers may avoid giving negative scores out of embarrassment, particularly when surveyed face to face or on the phone. In B2B situations, smaller customers may avoid negative reviews when surveyed by a large or critically important vendor, for fear of being cut off. To coax out candid feedback, leading NPS practitioners find ways to offer each customer appropriate levels of confidentiality. In B2B settings, for instance, companies can maintain transparency by reporting average scores for an account while keeping individual ratings confidential.

More serious problems arise when people try to game the survey system. Sales and service representatives at auto dealerships, for instance, are notorious for begging for top-box scores. Employees of other companies may engage in shenanigans, such as altering the phone numbers of dissatisfied customers so the surveyors can’t reach them. One clever call center manager decided that, instead of drawing customers for feedback from a pool comprising all calls, the pool would include only customers whose cases had been settled in their favor. So if a customer’s claim required several calls to settle, only the last call would generate feedback. If the customer’s claim was denied, he or she was never surveyed. Companies with robust Net Promoter Systems pay close attention to potential gaming of this sort. At Enterprise, gaming the system is called speeding and is grounds for dismissal.

A major source of inaccurate scores, one that has nothing to do with unethical behavior, is what we call responder bias. Responder bias occurs whenever the individuals who respond to a survey differ in some significant way from the overall population being surveyed. For instance, imagine a company with a 20% response rate. Its survey indicates that its NPS is 50 (60% promoters minus 10% detractors). Now imagine that the firm studies the behaviors of nonresponders (repeat purchases and increased purchasing over time, among others) and determines that the mix of that group is 10% promoter, 40% passive, and 50% detractor. In other words, the 80% of customers who ignored the survey had an NPS of negative 40. So the true NPS for the company, the weighted average of the two groups, is negative 22, not 50. That’s responder bias.
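The weighted average in this example can be checked with a few lines of Python. This is only a sketch of the arithmetic; the function names `nps_from_mix` and `blended_nps` are ours, chosen for illustration.

```python
def nps_from_mix(promoter_pct, detractor_pct):
    """NPS for a group, given its promoter and detractor percentages."""
    return promoter_pct - detractor_pct

def blended_nps(response_rate, responder_nps, nonresponder_nps):
    """Weight each group's NPS by its share of the surveyed population."""
    if not 0 <= response_rate <= 1:
        raise ValueError("response_rate must be between 0 and 1")
    return (response_rate * responder_nps
            + (1 - response_rate) * nonresponder_nps)

responder_nps = nps_from_mix(60, 10)      # 50, from the survey
nonresponder_nps = nps_from_mix(10, 50)   # -40, from behavioral research
true_nps = blended_nps(0.20, responder_nps, nonresponder_nps)
print(round(true_nps))  # -22
```

The lower the response rate, the more weight the unobserved group carries, which is why a flattering score on a 20% response rate deserves skepticism.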

If you can’t do the necessary research, consider scoring all nonresponders as detractors (probably not too far off in business-to-business settings) or as a 50-50 mix of passives and detractors (a reasonable estimate for many consumer businesses).
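As a rough sketch of how these two fallback assumptions change a reported score, the Python below reuses the 20% response rate and surveyed NPS of 50 from the example above. The function name and the assumption labels are hypothetical, introduced only for this illustration.

```python
# Assumed NPS for nonresponders under each fallback rule:
# all detractors -> 0% promoters - 100% detractors = -100
# half passives, half detractors -> 0% promoters - 50% detractors = -50
ASSUMED_NONRESPONDER_NPS = {
    "all_detractors": -100.0,
    "half_passive_half_detractor": -50.0,
}

def adjusted_nps(response_rate, responder_nps, assumption):
    """Blend the surveyed NPS with an assumed NPS for nonresponders."""
    if not 0 <= response_rate <= 1:
        raise ValueError("response_rate must be between 0 and 1")
    nonresponder_nps = ASSUMED_NONRESPONDER_NPS[assumption]
    return (response_rate * responder_nps
            + (1 - response_rate) * nonresponder_nps)

b2b_estimate = adjusted_nps(0.20, 50, "all_detractors")
b2c_estimate = adjusted_nps(0.20, 50, "half_passive_half_detractor")
print(round(b2b_estimate), round(b2c_estimate))  # -70 -30
```

Either rule is a deliberately conservative placeholder; research on what nonresponders actually do remains the better basis when it is available.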

Precise, granular scores

Companies don’t measure profit only at the corporate level; they break it down by business, product line, geographic region, plant, store and so on. Granular performance measurements enable individuals and small teams to make better decisions and to be held responsible for the results. Strong Net Promoter metrics require the same kind of precision and granularity. At Enterprise, for instance, the crucial breakthrough was pushing the measurement of customer loyalty down to the level of individual branches. The specificity of the data both allowed and encouraged employees to be much more responsive to customer feedback.
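A small Python sketch shows why granularity matters: an aggregate score can hide very different results underneath it. The branch names and ratings are invented for illustration, and `nps_by_group` is a hypothetical helper, not part of any company's system described here.

```python
from collections import defaultdict

def nps(ratings):
    """Percentage of promoters (9-10) minus percentage of detractors (0-6)."""
    ratings = list(ratings)
    if not ratings:
        raise ValueError("at least one rating is required")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100.0 * (promoters - detractors) / len(ratings)

def nps_by_group(responses):
    """Compute NPS separately for each group in (group, rating) pairs."""
    grouped = defaultdict(list)
    for group, rating in responses:
        grouped[group].append(rating)
    return {group: nps(ratings) for group, ratings in grouped.items()}

# Hypothetical responses from two branches.
responses = [
    ("Branch A", 10), ("Branch A", 9), ("Branch A", 6), ("Branch A", 8),
    ("Branch B", 4), ("Branch B", 7), ("Branch B", 10), ("Branch B", 2),
]

overall = nps(rating for _, rating in responses)
by_branch = nps_by_group(responses)
print(overall)    # 0.0
print(by_branch)  # {'Branch A': 25.0, 'Branch B': -25.0}
```

Here the combined score of zero conceals one branch at 25 and another at negative 25, which is exactly the kind of difference a branch manager can act on. With samples this small the branch scores would be far too noisy to trust in practice; the point is only the mechanics of the breakdown.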

In most companies granular measurement isn’t easy. Many different departments may influence a customer’s overall experience and therefore his or her loyalty. For example, an insurance client interacts with the agent, with billing, with claims, and maybe even with underwriting. At Intuit, the financial software company, senior managers realized early on that accurate evaluation of its customers’ experience had to include customer service, tech support, software design, sales and marketing and engineering. The trick is to distinguish between a customer’s satisfaction with a specific interaction, such as a call to customer service, and his or her loyalty to the overall relationship. That’s why so many companies rely on both bottom-up and top-down surveys (see below, “Bottom-up and top-down scores”).

The key: Validate with behaviors

In the end, there is only one sure way to check whether your system has effectively defused the land mines of low response rates, bias and so on: You must regularly validate the link between individual customers’ scores and those customers’ behavior over time. Ongoing analysis of retention, purchasing patterns, feedback and referrals can confirm the integrity of your feedback process. Establishing links between Net Promoter scores and business results will indicate that the scores are reliable.
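One simple form such a validation could take is to compare a later behavior, such as retention, across the three categories. The Python below is a sketch under that assumption; the records are invented, and `retention_by_category` is a hypothetical helper. A real analysis would use far larger samples and several behaviors, not retention alone.

```python
from collections import defaultdict

def classify(rating):
    """Map a 0-10 rating to promoter, passive or detractor."""
    if not 0 <= rating <= 10:
        raise ValueError("rating must be between 0 and 10")
    if rating >= 9:
        return "promoter"
    if rating >= 7:
        return "passive"
    return "detractor"

def retention_by_category(records):
    """Share of customers retained, per category, from (rating, retained) pairs."""
    totals = defaultdict(int)
    retained = defaultdict(int)
    for rating, stayed in records:
        category = classify(rating)
        totals[category] += 1
        retained[category] += int(stayed)
    return {category: retained[category] / totals[category] for category in totals}

# Hypothetical history: survey rating, and whether the customer
# was still a customer a year later.
history = [
    (10, True), (9, True), (10, True), (9, False),
    (8, True), (7, False), (7, True), (8, False),
    (3, False), (6, True), (0, False), (5, False),
]

rates = retention_by_category(history)
print(rates)  # {'promoter': 0.75, 'passive': 0.5, 'detractor': 0.25}
```

If retention did not fall from promoters to passives to detractors in data like this, that would be a signal to re-examine the survey process before trusting the scores.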

Charles Schwab, for example, spent a considerable amount of time testing its metric to establish the value and integrity of the process. Schwab’s researchers learned that shorter surveys brought higher response rates. They found that changes in the presentation of survey questions needed to be rigorously tested to ensure that they didn’t introduce variation into the score. Over time, Schwab was able to develop a robust, reliable metric, customized for its needs. The team could demonstrate that the top 10% of branches, as measured by NPS, grew 30% faster, on average, than the branch network as a whole. It also found that about half of new Schwab clients listed referrals or recommendations as a primary reason for coming to Schwab. Evidence like that solidified support for NPS across the organization.

Leading companies such as Schwab understand that it is the behaviors, not the scores, that define promoters, passives and detractors. It is the behaviors, not the scores, that drive growth. NPS is a valuable tool only when the scores accurately reflect the strength or weakness of relationships. If organizations take seriously the goal of turning customers into promoters, they must take equally seriously the need to measure their success.

Fred Reichheld and Rob Markey are authors of the bestseller The Ultimate Question 2.0: How Net Promoter Companies Thrive in a Customer-Driven World. Markey is a partner and director in Bain & Company’s New York office and leads the firm’s Global Customer Strategy and Marketing practice. Reichheld is a Fellow at Bain & Company. He is the bestselling author of three other books on loyalty published by Harvard Business Review Press, including The Loyalty Effect, Loyalty Rules! and The Ultimate Question, as well as numerous articles published in Harvard Business Review.

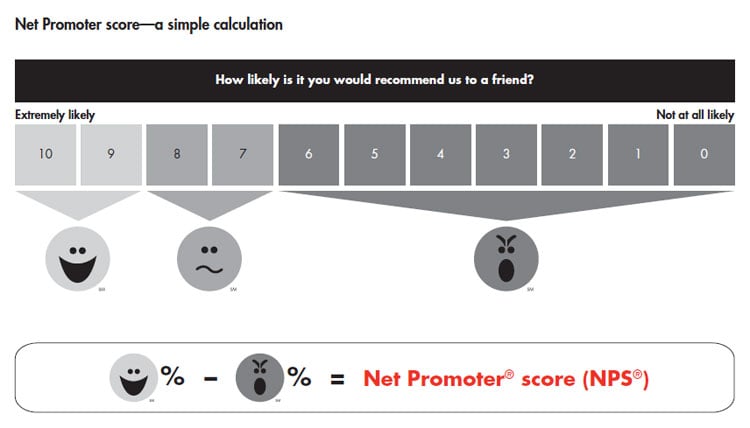

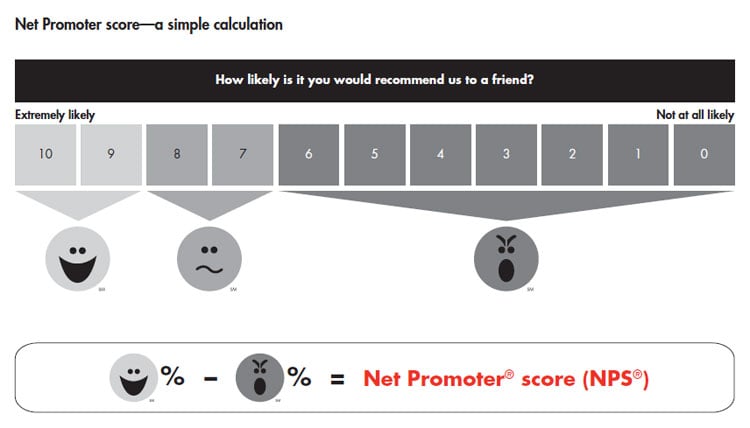

Calculating your Net Promoter score

The Net Promoter System begins with scores calculated from short, frequent customer surveys. Companies typically ask this question: On a zero-to-10 scale, how likely is it that you would recommend this company [or this product] to a friend or colleague? A follow-up question asks the primary reason for the score. Ratings of nine or 10 indicate promoters; seven and eight, passives; and zero through six, detractors. The Net Promoter score is simply the percentage of promoters minus the percentage of detractors (see chart).
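The calculation described above can be sketched in a few lines of Python. The function names and the sample ratings are ours, chosen for illustration; only the cutoffs (nine and 10, seven and eight, zero through six) come from the definition.

```python
from collections import Counter

def classify(rating):
    """Map a 0-10 rating to promoter, passive or detractor."""
    if not 0 <= rating <= 10:
        raise ValueError("rating must be between 0 and 10")
    if rating >= 9:
        return "promoter"
    if rating >= 7:
        return "passive"
    return "detractor"

def net_promoter_score(ratings):
    """Percentage of promoters minus percentage of detractors."""
    ratings = list(ratings)
    if not ratings:
        raise ValueError("at least one rating is required")
    counts = Counter(classify(r) for r in ratings)
    return 100.0 * (counts["promoter"] - counts["detractor"]) / len(ratings)

# Ten hypothetical responses: 5 promoters, 2 passives, 3 detractors.
sample = [10, 9, 9, 8, 7, 6, 3, 10, 9, 5]
print(net_promoter_score(sample))  # 20.0
```

Passives count toward the total number of responses but toward neither percentage, so a score can range from negative 100 to 100.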

Bottom-up and top-down scores

Top-down Net Promoter scores, based on surveys of customers’ attitudes toward several competitors in an industry, are designed primarily to show a company’s relative performance and identify aggregate patterns rather than to generate diagnostic insights for individual customers. Companies generally measure these scores through a double-blind process in which the respondent doesn’t know who is sponsoring the survey and the company can’t trace back responses to any individual respondent. Bottom-up surveys, on the other hand, are openly sponsored by the company, and the company keeps track of who responds. They often take place after particular transactions. Enterprise, for instance, surveys a sample of its customers within a few days of the end of their rentals. In B2B relationships or some consumer relationships with continuous interactions, a bottom-up survey might be triggered by the end of a quarter or on an anniversary date. Some people call these periodic surveys “relationship” or “relational” NPS feedback.

Both are essential. For example, a company might ask a sample of its customers just three questions immediately after a phone interaction: How likely would you be to recommend us to a friend or colleague? To what extent did this recent phone interaction increase or decrease your likelihood to recommend us? and Why? Tracking Net Promoter scores at each interaction enables managers to spot trends or emerging problems; it also helps them identify which departments and individual reps are doing the best job of turning customers into promoters. Perhaps most important, it provides additional input for coaching and decision making by customer-facing employees and groups. On the top-down front, the company can continue to sample its broader customer base, asking the “likelihood to recommend” question and probing why, sometimes supplemented with a few other questions. Ideally, the combination of data will allow managers to summarize results by customer segment, customer profitability and type of inquiry or service problem. It will also help them understand which dimensions of the customer experience warrant investment.

Net Promoter®, Net Promoter System®, Net Promoter Score® and NPS® are registered trademarks of Bain & Company, Inc., Fred Reichheld and Satmetrix Systems, Inc.